Misinformation and fake news make it difficult for farmers to identify trustworthy information. With more and more farmers using social media, identifying and reporting misinformation becomes important. In this blog, Aditya and Bhuvana discuss the communication strategies to tackle the menace of misinformation in agriculture.

CONTEXT

In this era of information deluge, driven by social media and internet usage, it is important for scientists to communicate their research to the general public in ways that are easy to understand. This is even more important in applied sciences like agriculture, where the end users of research are mostly farmers.

Extension personnel have a dual role to play here: one is to communicate the important information to farmers in a way they understand (Box 1), and the second is to help farmers to identify and deal with misinformation (Box 2).

| Box 1: Making sense of the information Anyone who has a smartphone can now access weather forecasts on an hourly basis. However, how many of us can make sense of the forecast? What does a 40% chance of rain on a particular day mean? Does it mean it will rain slightly? Does it indicate that the area will receive 40% of the region’s average daily rainfall? The true interpretation depends on the level of confidence in the forecast and the probability. But do people really understand odds and probabilities? Sadly, the proportion of such people is low. Science communication needs to provide information in a way that is easier to understand. |

Moving on to the second role, farmers need support to identify the misinformation or non-transparent messages being communicated to them, to verify these, and to understand the real meaning by seeing the complete picture. Misinformation is different from fake news. Fake news is something totally wrong or unproved: a hypothesis about an issue with no facts backing it. Misinformation, on the other hand, uses correct facts and figures to highlight only a part of the story, or to project a wrong narrative by selectively presenting some facts and hiding others that don’t fit the story. Misinformation is more dangerous than fake news, as it is more difficult to identify and verify. Though misinformation is common in politics, it is also permeating other fields, including agriculture.

Professor Joe Schwarcz, Director of the McGill Office for Science and Society in Montreal, Canada, highlighted in an interview[1] how a lot of misinformation is being spread about pesticide use in agriculture. On one hand, pro-environment groups cherry-pick facts and figures to project how dangerous pesticide use can be, without revealing that these effects emerge only when pesticides are used unscrupulously or inappropriately. On the other hand, pesticide manufacturers exaggerate the effectiveness of chemicals in reducing risk, while not highlighting environmental risks.

| Box 2: Non-transparent communication A pesticide manufacturer claims that using its new seed treatment formulation gives a 25% reduction in disease incidence. This gives the impression that the new chemical is very effective. However, we cannot come to this conclusion unless we understand the base rate effect. Suppose that without the seed treatment there were 4 cases of disease per 100 plants, and after using the chemical the incidence was 3 per 100. Expressed as a percentage, this change is ((4 − 3)/4) × 100 = 25%, even though the absolute reduction is only 1 case per 100 plants. This is a case of non-transparent communication and a case of misinformation. It doesn’t make economic sense to use the chemical when the incidence of the disease is so low; however, the effectiveness of the chemical is communicated in a way that persuades farmers to use it. |
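The arithmetic in Box 2 can be sketched in a few lines of Python. This is only an illustration, not a standard tool: the function name `risk_summary` and the output keys are our own hypothetical choices, and the input figures are the ones assumed in Box 2.

```python
from fractions import Fraction


def risk_summary(cases_without, cases_with, per):
    """Compare relative and absolute risk reduction for one treatment.

    cases_without / cases_with are disease counts out of `per` plants,
    without and with the treatment (hypothetical inputs, as in Box 2).
    """
    base = Fraction(cases_without, per)      # baseline incidence
    treated = Fraction(cases_with, per)      # incidence with treatment
    absolute_reduction = base - treated      # difference in incidence
    relative_reduction = absolute_reduction / base
    return {
        "relative_reduction_pct": float(relative_reduction * 100),
        "absolute_reduction_per_100": float(absolute_reduction * 100),
        # How many plants must be treated to avoid one case of disease
        "plants_treated_per_case_avoided": float(1 / absolute_reduction),
    }


summary = risk_summary(cases_without=4, cases_with=3, per=100)
# relative_reduction_pct          -> 25.0
# absolute_reduction_per_100      -> 1.0
# plants_treated_per_case_avoided -> 100.0
```

The same result reads very differently depending on which number is reported: "25% fewer diseased plants" versus "one fewer diseased plant in every 100 treated". Reporting both keeps the communication transparent.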

Similarly, there is a lot of misinformation regarding organic agriculture, natural farming, zero budget farming and many more. There are stories and articles about how these technologies can be game changers. But how many of these are based on solid empirical evidence? As a scientific fraternity, have we tried to get to the root of these questions? Also, once a person starts reading such stories on social media, they are likely to be shown many more of the same kind, because of the similarity-finding algorithms embedded in social media. Repeatedly reading similar stories reinforces these beliefs. We have highlighted this issue in one of our earlier blogs too.[2]

CAN WE HAVE ALGORITHMS TO IDENTIFY MISINFORMATION?

Unfortunately, no. The only way out is to scrutinize information against the evidence and the principles of science, which machine learning algorithms cannot do. The problem is that the concept of truth is fuzzy; there is no clear distinction between what is true and what is not, and hence classification algorithms from machine learning cannot function.[3] (Additional reading: Prof. David Rand of MIT Sloan has authored some interesting papers on the issue of fake news and misinformation.[4]) So, the agricultural knowledge and information system, especially in the public sector, has to play a key role in vetting agriculture-related information that comes up in either mainstream media or social media. We can take a cue from existing private organizations like ‘Snopes.com’, Fact Checker, Hoax slayer and many other groups engaged in identifying fake news and misinformation.[5] Can the public extension system remain unbiased and neutral and critically evaluate information? This is easier said than done.

The first step is to identify the most widely believed and dangerous pieces of misinformation in circulation. Keeping a close watch on agriculture-related information in social media and mainstream media is critical. WhatsApp groups where farmers share information can also be a good way of tracking what is being shared. The next step is to establish the authenticity of the information. There are no hard and fast rules here, and we call it both an art and a science. What we mean by ‘evidence’ is a question with no simple answer! Whatever is published in a journal is not necessarily true. One has to examine what the paper reports as well as what it doesn’t report. Also, is there a conflict of interest? Who funded the study? Have the scientific principles of experimentation been rigorously followed? Do the conclusions stem directly from the design and the results? Do they align with established scientific principles? The answers to all these questions determine what constitutes ‘evidence’.

One of the most important roles of an extension professional is to provide complete information to farmers and help them make the right decision. But for this, communication needs to be transparent, and the extension professional should present an unbiased view of the topic, including the pros and cons of an intervention, so that farmers can make informed decisions. However, this is often not the case when scientists and extensionists talk about a technology, input, or policy that is promoted and endorsed by the government. Because the government promotes them, such policies are rarely debated on the basis of evidence and are simply promoted widely, which is obviously not good for science. (Related reading: Nandita and Sreekumar have discussed these issues in the promotion of Zero Budget Natural Farming without scientific evidence in AESA Blog 142.) This amounts to the scientific fraternity and the extension system playing an active role in generating and spreading misinformation! The direct negative effect is that people lose ‘trust’ in both science and the extension system.

Once the misinformation is verified, communicate the complete story in clear, easily understandable language. Important points to consider while communicating scientific findings, including empirical findings, are highlighted below. Remember, providing empirical evidence in terms of numbers is important, though the information needs to be simplified.

SOME RULES OF THUMB IN COMMUNICATING EMPIRICAL FINDINGS TO COMMON PEOPLE

a) Understanding probabilities

People usually have difficulty understanding probabilities (Konold 1995), and simplify them as odds or chances (as discussed in Box 1). Research in this area indicates that general comprehension increases when a numerical probability is accompanied by a verbal probability, i.e., a verbal translation such as ‘likely’, ‘very likely’ and so on (National Academies of Sciences, Engineering, and Medicine 2017; Wintle et al. 2019).
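As a rough sketch of what pairing a number with a verbal label could look like, the snippet below attaches a phrase to a probability. The cut-off values and phrases are our own illustrative assumptions (loosely modelled on the kind of likelihood scales used in climate assessment reports); they are not taken from the studies cited above and would need to be agreed upon and tested with the intended audience.

```python
def describe_probability(p):
    """Return a verbal label together with the numeric probability.

    The bands below are illustrative assumptions, not an established
    standard; adjust them to the scale your organization adopts.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    bands = [
        (0.90, "very likely"),
        (0.66, "likely"),
        (0.33, "about as likely as not"),
        (0.10, "unlikely"),
    ]
    for threshold, label in bands:
        if p >= threshold:
            return f"{label} ({p:.0%})"
    return f"very unlikely ({p:.0%})"


message = describe_probability(0.40)
# -> "about as likely as not (40%)"
```

The point is only that the number and the phrase travel together in the message, so that neither is left to be interpreted on its own.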

b) Exercise caution while using relative risks

When expressing relative risk (the comparative change in risk due to a treatment, as in Box 2), do not report a percentage change or Relative Risk (RR) on its own. It is better to use natural frequencies. One classic example comes from the field of medicine. In 1995, news spread that new oral contraceptives carried a two-fold higher risk of venous thromboembolism than the older products. People panicked and oral contraceptive use decreased, resulting in more abortions and a higher birth rate. But what constituted the two-fold increase? The absolute risk of venous thromboembolism was 1 in 7000 for the old products and 2 in 7000 for the new contraceptives! So, when only relative risk is used, it can misrepresent the facts (Fischhoff 2012).
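To illustrate, a relative risk can be turned back into natural frequencies once the baseline is known. The helper below is a hypothetical sketch (the function name and wording of the sentence are ours), using the figures from the contraceptive example above.

```python
def natural_frequency_statement(baseline_cases, denominator, relative_risk):
    """Express a relative risk as natural frequencies on a common denominator."""
    new_cases = baseline_cases * relative_risk
    extra = new_cases - baseline_cases
    return (
        f"{baseline_cases:g} in {denominator} before, "
        f"{new_cases:g} in {denominator} after "
        f"({extra:g} extra per {denominator})"
    )


statement = natural_frequency_statement(
    baseline_cases=1, denominator=7000, relative_risk=2
)
# -> "1 in 7000 before, 2 in 7000 after (1 extra per 7000)"
```

"Twice the risk" and "one extra case in every 7000 users" describe the same data, but the second leaves far less room for panic.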

c) Use the same denominator while presenting comparisons

Whenever percentages are given, also provide the underlying frequencies. And when providing frequencies for different groups such as A, B, C and D, use the same denominator (per 100 or per 1000). For example, if the disease incidence is 40 per 200 plants in treatment A and 30 per 180 plants in treatment B, it is often difficult for people to make sense of these numbers. Instead, the numbers can be expressed as 20 per 100 and about 17 per 100. Another related instance is how we communicate Covid-related statistics. In official communications, the number of infections and deaths due to Covid is reported in percentages (example: less than 2% of Indians are infected by Covid-19), whereas vaccination is reported in absolute numbers (17 million doses of vaccines administered). Transparent communication should make comparison easy and, as far as possible, use the same metrics throughout.
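The conversion to a common denominator is simple enough to sketch in code. The function name `per_base` is a hypothetical choice of ours; the figures are those of treatments A and B in the example above.

```python
def per_base(cases, total, base=100):
    """Rescale a frequency to a common denominator, rounded to a whole number."""
    return round(cases / total * base)


treatment_a = per_base(40, 200)  # -> 20 per 100 plants
treatment_b = per_base(30, 180)  # -> 17 per 100 plants (16.7 before rounding)
```

Rounding to whole plants keeps the message easy to read; where the difference between treatments is small, it may be better to use a larger base such as per 1000 rather than report decimals.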

d) Less is more

In behavioural economics, it is said that less is more (Peters et al. 2007). Many studies have shown that, even in behaviour change communication, it is often beneficial to provide only the important information rather than overloading people with trivial details.

e) Framing Effect

Use the ‘framing effect’, prospect theory and other behavioural science tools to promote the desired behavioural change. According to prospect theory, humans tend to weigh losses more heavily than equivalent gains. The framing effect postulates that how information is presented determines how people make choices based on that information (Tversky & Kahneman 1985). For example, suppose that using gloves and protective wear while spraying pesticide could save INR 10,000 in future health expenditure, and you need to communicate this. The communication is likely to be more effective if the same information is reframed as ‘by not using gloves and mask, you will lose INR 10,000 on future health expenses’.

f) Using appropriate time frames

It is important to use appropriate time frames. Farmers are more likely to be convinced of the importance of water conservation when you communicate the risk of over-extraction over a 10-year period than when you explain only the short-term or immediate risks of water overuse.

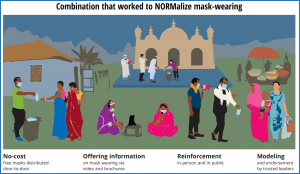

g) Graphical presentations and illustrations

Illustrations are always helpful in conveying a message more effectively. Take a look at this illustration based on a Randomized Controlled Trial (RCT) by JPAL and other collaborators (Abaluck et al. 2021). The RCT examined different interventions that can induce people to wear masks in 600 selected villages of Bangladesh. The results indicated that the combination of strategies shown in the illustration was the most effective in normalizing mask wearing. The authors used the illustration to communicate the results in a way that can be easily understood by all (Figure 1).

Figure 1. Illustration to communicate results of RCT on interventions to normalize mask wearing in Bangladesh

CONCLUSION

With greater access to information, the goal post of agricultural extension now has to shift to ‘ensuring farmers have access to the right kind of information’, which empowers them to make ‘informed decisions’. Misinformation and false news can damage the credibility of science and hamper the efforts of extension professionals. As Desmond Tutu says, “If you are neutral in situations of injustice, you have chosen the side of the oppressor. If an elephant has its foot on the tail of a mouse and you say that you are neutral, the mouse will not appreciate your neutrality.” Similarly, being silent when misinformation is being spread is as good as spreading the misinformation, which erodes the credibility of science. Extension professionals and scientists should learn the skill of identifying misinformation, stop it from being shared, and quell it before it reaches a large number of people. It is important to understand how this type of communication differs from scientific communication and what principles should be used. In this blog, we have tried to list a few tips that can be helpful in communicating the results of empirical research to common people. This is by no means an exhaustive set of principles; it is only an indicative set of tools. It is high time these issues found a place in the curricula of undergraduate and postgraduate degree programmes in agriculture.

REFERENCES

Abaluck J, Kwong LH, Styczynski A, Haque A, Kabir MA, Bates-Jefferys E … and Mobarak AM. 2021. Normalizing community mask-wearing: A cluster randomized trial in Bangladesh (No. w28734). National Bureau of Economic Research.

Fischhoff B. 2012. Communicating risks and benefits: An evidence based user’s guide. Rome: FAO.

Konold C. 1995. Issues in assessing conceptual understanding in probability and statistics. Journal of statistics education 3(1).

National Academies of Sciences, Engineering, and Medicine. 2017. Communicating science effectively: A research agenda. National Academies Press.

Peters E, Dieckmann N, Dixon A, Hibbard JH and Mertz CK. 2007. Less is more in presenting quality information to consumers. Medical Care Research and Review 64(2):169-190.

Tversky A and Kahneman D. 1981. The framing of decisions and the psychology of choice. Science 211(4481):453-458.

Tversky A and Kahneman D. 1985. The framing of decisions and the psychology of choice. Behavioral Decision Making 25-41. doi:10.1007/978-1-4613-2391-4_2

Wintle BC, Fraser H, Wills BC, Nicholson AE and Fidler F. 2019. Verbal probabilities: Very likely to be somewhat more confusing than numbers. PloS One, 14(4):e0213522.

FOOTNOTES

- Here’s What a Chance of Rain Really Means | Latest Science News and Articles | Discovery

- Fake news, misinformation and disinformation in agriculture – YouTube

- Blog 134- From UTOPIA to DYSTOPIA: Social Media as the Future of Agricultural Extension | Welcome to AESA (aesanetwork.org)

- Understanding and Reducing the Spread of Misinformation Online – YouTube

- David G. Rand | MIT Sloan

- Watchdog Groups – News: Finding News and Identifying “Fake” News – Research Guides at Stetson University

- Normalizing Community Mask-Wearing: Evidence from a Randomized Evaluation in Bangladesh | Innovations for Poverty Action (poverty-action.org)

Aditya KS is a scientist at Division of Agricultural Economics, ICAR – Indian Agricultural Research Institute, New Delhi. You can find him at his blog adityarao.wordpress.com. To get in touch, email at adityaag68@gmail.com.

Bhuvana is working as a consultant at Centre for Research on Innovation and Science Policy, Hyderabad. She can be reached at bhuvana.rao7@gmail.com.

Very nicely written blog on science communication with implications to all extension stakeholders. Congratulations to both the authors and thanks to AESA for publishing this blog

Excellent write-up. Time demanding topic.

Very relevant article

This blog can be read best against the context of the present pandemic situation. The spread of COVID-19 has also resulted in an avalanche of misinformation, known as an infodemic, spread mainly through various social media platforms. A lot of misinformation and fake news is being propagated in connection with the cause and cure of the disease. WHO has launched a number of initiatives to check this infodemic, such as training health officials on infodemic management https://www.who.int/…/call-for-applicants-for-2nd-who…, conducting global conferences to create more awareness https://www.who.int/…/who-global-conference-on… and generating evidence on better practices for the same purpose https://www.who.int/…/call-for-submissions-innovative…. There will be many lessons for extension practitioners to learn from such interventions to deal with the infodemic in agriculture. I would like to profusely thank the authors for throwing light on an important topic and on the art and science of drafting an interesting blog!

“I read the blog of Aditya and Bhuvana with great interest. Excellent read. Congrats to the authors.

The essence of the blog is the need to ensure information quality which is defined as ‘the fitness for use by the end-users of the information provided’. A lot of information is given in terms of quantity (referred to as information load by the authors). However, quality of information is a challenge which needs to be addressed. The authors have tried to address this issue. In this context, I suggest the following book for further reading, which will be useful to the young researchers. The book covers dimensions of information quality and also deals with assessing the quality of data.

Ron S Kenett and Galit Shmueli, 2016 Information Quality: The potential of data and analytics to generate knowledge

John Wiley & Sons.”

The authors are known for their creative blogs addressing pressing problems in agricultural development. This blog is yet another attempt to effectively flag and explore ways and means to overcome the pitfalls of the information deluge affecting farmers in taking farming decisions. The tips and food for thought for further work in the area are very useful, and I hope follow-ups will further help to address this issue satisfactorily. Good blog, well written. Congratulations! Keep it up!

This topic is of great relevance to present day context. With social media brimming with clickbaits for the socially anxious, the chances for society to be misinformed are high. There was an interesting article on Psychological inoculation theory and how it can be used to prevent misinformations and fake news from spreading. (Link to the article- Inoculating against fake news about COVID-19 -https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7644779/) It is analogous to the medical vaccination process, wherein messages in the form of weak misinformations are designed and delivered to the people. According to this theory, exposure to such messages, will help people develop a resistance to stronger misinformations and fake news. This may be just one theory, but exploring and practically applying such theories to finding field level solutions can increase the credibility of our discipline.

Both the blogs were excellent reads and I thank the authors for providing insights into a very relevant and interesting topic.

“Thanks to the Drs Aditya and Bhuvana for coming out with useful tips to mitigate the spread of misinformation or misleading information. Most often the scientists themselves are involved in communicating misinformation thus eroding the credibility of the scientists. We have seen how our scientists highlight the advantages of a technology which they want to promote without indicating its disadvantages or the conditions necessary for its application ( ex. Crossbred animals).

Quite often several agencies including public sector organizations publish success stories to impress upon the farmers to adopt technologies without indicating that there are several failure stories. We must understand that failure stories do provide valuable information about the reasons for failure just like the dead animal gives useful information when necropsy is conducted on it.

The extension personnel especially the public sector agencies must desist in publishing such unique stories the success of which depends not only on the technology but also upon several factors. As pointed out by the authors that it is better for the extension personnel to give the complete information to the farmers (rather than suppressing the negative information) to enable them to take decisions rather than we sell the decisions.

Thanks to AESA also for publishing this informative blog.”